Introducing BYOLLM!

Or, How You Bring Your Own LLM to Wave

What is BYOLLM?

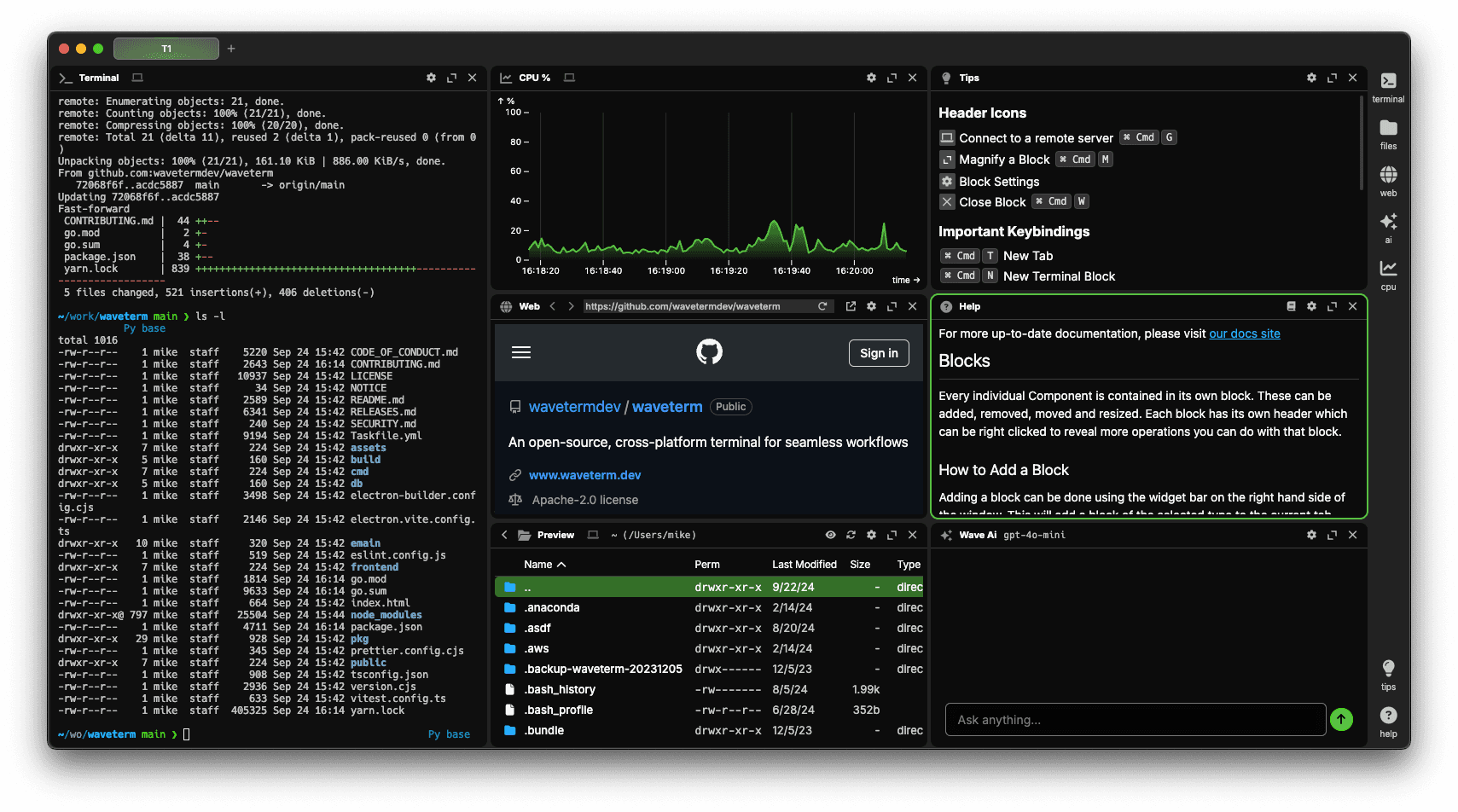

At Wave, we want users to have a choice in the AI tools they bring to Wave, rather than simply bolting on the latest AI trend. That's why we're excited to announce BYOLLM, or "Bring Your Own Large Language Model." Whether for security, privacy, or ethical reasons, this new feature lets you use whichever LLM provider you like, right inside Wave.

At the moment, Wave supports four local LLM providers: Ollama, LM Studio, llama.cpp, and LocalAI. If your provider isn't officially supported, you can still bring your own, local or cloud-based, as long as it is OpenAI API-compatible. Each of these providers supports a wide range of models, so this integration lets you bring hundreds of LLMs to your computer.

Getting Started with Local LLMs

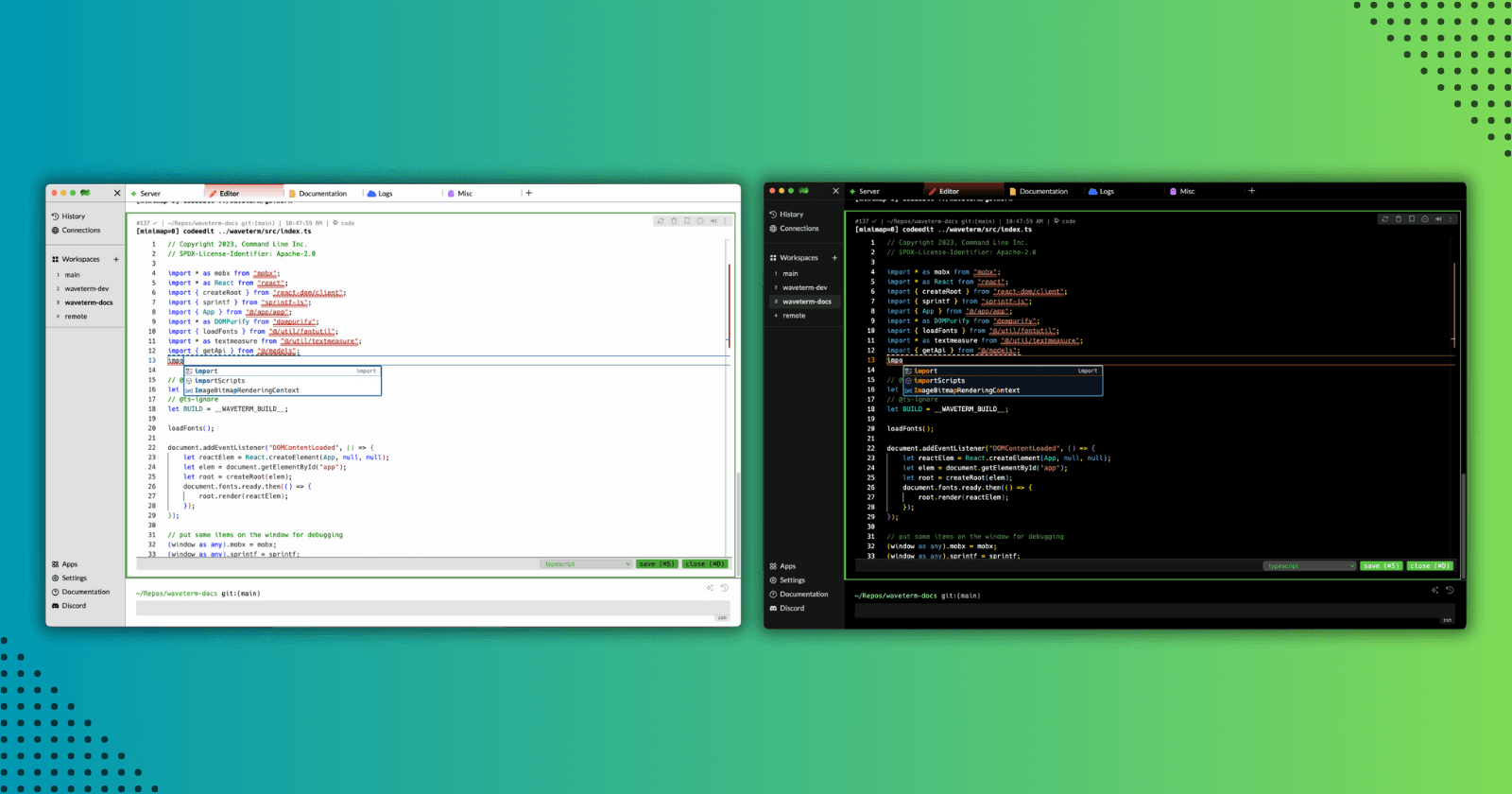

Setting up your own LLM in Wave is a simple process. Start with our list of supported LLM providers, select yours, and follow its instructions. For local models, configuration usually consists of setting just two parameters: aibaseurl and aimodel. Both can be set either through the UI or from the command line, so you can get started with your preferred LLM quickly.

For example, to configure Ollama, simply navigate to the "Settings" menu and set the AI Base URL to http://localhost:11434/v1 and the AI Model to any model you've downloaded using Ollama. In this example, we're using mistral.
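If you'd like to confirm that a base URL and model name work before entering them in Wave, a small script can send one chat request to the same endpoint. The following is a minimal sketch, not part of Wave: it assumes your provider exposes the OpenAI-style chat completions route under the base URL (as Ollama does at http://localhost:11434/v1), and the function names build_chat_request and ask are made up for illustration.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build a POST request for an OpenAI-style chat completions route.

    Assumption: the provider serves the route at <base_url>/chat/completions.
    """
    url = base_url.rstrip("/") + "/chat/completions"
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(base_url: str, model: str, prompt: str, timeout: float = 60.0) -> str:
    """Send the prompt and return the text of the first reply.

    Requires the provider to be running and the model to be available.
    """
    request = build_chat_request(base_url, model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With Ollama running and mistral downloaded, calling ask("http://localhost:11434/v1", "mistral", "Say hello") should return a short reply. If it raises a connection error instead, the base URL is the first thing to check.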

Once configured, you can start using your LLM in Wave. Simply ask a question using the command box (or use the /chat command), such as "How do I build a container from a Dockerfile?", and Wave AI will provide an answer using your selected LLM.

The configuration process is similar for all supported LLM providers but varies slightly. Be sure to check out the detailed documentation for each provider to learn about their specific options and requirements.

Getting Started with Cloud-based LLMs

For cloud-based LLMs, Wave currently supports only OpenAI API-compatible providers. If you or your team has an OpenAI account, you can easily configure Wave to use GPT-4 (or the newly released GPT-4o) and fine-tuned models for added flexibility and privacy, and to avoid our current rate limit of 200 requests per day.
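When choosing which model name to configure, it can help to see what your account or provider actually offers. Here is a rough sketch, again not part of Wave, that assumes the provider implements the OpenAI-style model listing route at the base URL plus /models and accepts an API key as a bearer token. The helper names are hypothetical, and the key shown in the usage note is a placeholder.

```python
import json
import urllib.request
from typing import Optional


def build_models_request(base_url: str, api_key: Optional[str] = None) -> urllib.request.Request:
    """Build a GET request for the OpenAI-style model listing route."""
    headers = {"Accept": "application/json"}
    if api_key:
        # Cloud providers such as OpenAI expect the key as a bearer token;
        # local providers usually need no key at all.
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        base_url.rstrip("/") + "/models", headers=headers, method="GET"
    )


def extract_model_ids(response_body: dict) -> list:
    """Pull the model identifiers out of a {"data": [{"id": ...}]} response."""
    return sorted(entry["id"] for entry in response_body.get("data", []))


def list_models(base_url: str, api_key: Optional[str] = None, timeout: float = 30.0) -> list:
    """Fetch and return the sorted model identifiers offered at base_url."""
    request = build_models_request(base_url, api_key)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return extract_model_ids(json.load(response))
```

For OpenAI, list_models("https://api.openai.com/v1", "YOUR_API_KEY") returns the identifiers available to your account; any of them is a candidate for the model setting. Reading the key from an environment variable rather than hard-coding it keeps it out of your scripts.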

We're also currently testing Perplexity, an OpenAI-compatible cloud-based provider that many users have requested. Follow us on X and LinkedIn to be notified when we announce support for it.

The Future of BYOLLM

In the coming weeks and months, we hope to expand BYOLLM to support more commercial cloud-based models, such as Google's Gemini and Anthropic's Claude. Our goal is to give you access to the latest LLMs so you can tailor your AI experience to your specific needs and concerns.

Lastly, we're committed to expanding how Wave AI is surfaced in the product to make it more useful. At the moment, Wave AI is limited to the command box and the /chat command, but we are developing more ways to use AI for tasks like troubleshooting, autocomplete, and much more. We think the terminal has a lot to gain from the latest advances in AI, and we're excited to see how they can transform the terminal experience.

Wrapping Up

We couldn't be more excited about BYOLLM and the future of our AI integrations. Download Wave today to try our latest integrations and experience the power of BYOLLM firsthand. We can't wait to see what you'll create!

Also, feel free to join the Wave community on Discord if you need assistance setting up one of these integrations, want to suggest additional LLMs for future inclusion, or simply wish to connect with other Wave users.